The exact cause of the first fatal crash involving a self-driving car is still unclear, but the details known so far point to one possible problem area: the classification of objects.

Autonomous cars receive a steady stream of information from their sensors, most commonly a combination of multiple cameras, radar, ultrasound, and lidar. Between one and four terabytes of data are generated per car per hour, all of which has to be processed and interpreted in real time.
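To get a feeling for what that means per second, the hourly figure can be converted into a sustained data rate. This is only a back-of-the-envelope sketch; it assumes decimal units (1 TB = 10^12 bytes), and the function name is made up for illustration.

```python
# Convert the "1 to 4 terabytes per hour" figure into a per-second rate.
# Assumption: decimal units (1 TB = 10**12 bytes, 1 MB = 10**6 bytes).

BYTES_PER_TB = 10**12
BYTES_PER_MB = 10**6
SECONDS_PER_HOUR = 3600


def mb_per_second(tb_per_hour: float) -> float:
    """Return the sustained data rate in megabytes per second."""
    return tb_per_hour * BYTES_PER_TB / SECONDS_PER_HOUR / BYTES_PER_MB


for tb in (1, 4):
    print(f"{tb} TB/h is about {mb_per_second(tb):.0f} MB/s")
# 1 TB/h is about 278 MB/s
# 4 TB/h is about 1111 MB/s
```

In other words, the car has to digest somewhere between a few hundred megabytes and more than a gigabyte of sensor data every second.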

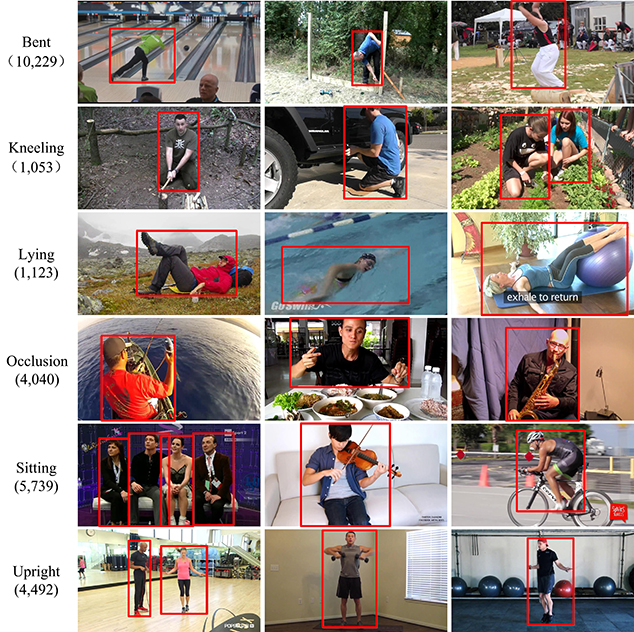

From those amounts of data the car needs to understand which objects surround it, what behaviors they typically show, in what context those behaviors are valid, and how to react to them. Humans, as the most vulnerable objects, get the most attention. But recognizing a human shape is only the start, because humans come in a variety of shapes. Size is one variable (adults, children), pose is another. Humans do not always walk upright; sometimes they are bent over, they sit, they crawl, or they strike many other unusual poses. Still, autonomous cars must recognize humans, no matter how they are posed.

And it doesn’t stop there. Humans walking along roads are often loaded with boxes or bags. At Halloween many little humans run around in crazy costumes, making it difficult for cars to recognize what the heck that thing in front of them is. Or they ride bicycles, wheelchairs, Segways, or motorbikes. Each mobility device has different movement dynamics. Motorbikes are fast and tend to pass between cars. Bicycles occasionally change direction very abruptly.

Gestures from pedestrians are an important way of communicating with cars, and autonomous vehicles have to understand them.

What is known so far is that the victim of the fatal accident in Tempe was a homeless woman pushing a bike loaded with plastic bags across a four-lane street. The shape of that combination could have been confusing; the car may not have had anything like it in its database of classified objects.

The image above shows how different the shape of a person becomes when pushing a loaded bike. Although companies try to test as many variations as possible on their test tracks first, not all objects and situations can be predicted. Even databases with annotated videos and images of humans are often just the beginning. That’s the reason why autonomous vehicles have to be tested outside of test tracks, in the real world.

One possible reason why the car didn’t recognize the woman is that her shape confused it and led to a false classification. The car could have misinterpreted the woman as a stationary object, perhaps mistaking her for a bush. And bushes normally don’t move. That may have led the car to decide not to reduce speed, even though the object appeared to be in the middle of the street.

An additional factor could have been that the accident happened at 10 pm local time, when it was dark and the car’s cameras may have delivered only limited resolution and a lower density of information. One or more sensors could also have been defective or badly calibrated and failed to deliver accurate data. Or the processors could have been too busy and lagged, classifying and reacting too late.

All of that can mean that an object is not detected at all, or that we get so-called false positives: the sensors report an object where in fact there is none. A sudden light, a snowflake, or sensor noise can falsely indicate an object on the road. To prevent sudden maneuvers of the car, the processors assign probabilities to what the sensors detected. That should help filter out false positives.
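How such probabilities might be used can be illustrated with a small sketch. It is not how any particular manufacturer does it; the class name, the confidence threshold, and the window sizes are all invented for illustration. The idea is simply to treat an object as real only after it has been detected with sufficient confidence in several recent frames.

```python
from collections import deque


class DetectionFilter:
    """Confirm an object only after repeated confident detections.

    Hypothetical sketch: threshold and window sizes are made-up values.
    """

    def __init__(self, min_confidence=0.6, window=5, min_hits=3):
        self.min_confidence = min_confidence
        self.min_hits = min_hits
        # Remembers whether each of the last `window` frames was a confident hit.
        self.history = deque(maxlen=window)

    def update(self, confidence: float) -> bool:
        """Feed one frame's confidence; return True if the object is confirmed."""
        self.history.append(confidence >= self.min_confidence)
        return sum(self.history) >= self.min_hits


# A single bright glint: one confident frame, then nothing.
glint = DetectionFilter()
print([glint.update(c) for c in [0.9, 0.1, 0.0, 0.0, 0.0]])
# [False, False, False, False, False]

# A real object whose confidence rises as the car gets closer.
pedestrian = DetectionFilter()
print([pedestrian.update(c) for c in [0.5, 0.7, 0.8, 0.9, 0.9]])
# [False, False, False, True, True]
```

The example also shows the price of this approach: the glint is suppressed, but the real object is only confirmed in the fourth frame. The stricter the filter against false positives, the later the car reacts to something that is really there.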

That is exactly why it is important to test situations in simulators under different conditions. Waymo is rumored to test certain scenarios in up to 6,000 different variations. Light, weather, and other vehicles and objects on the street all play an important role.
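A number like 6,000 is reached quickly once a few conditions are combined. The following sketch shows how the count multiplies; the dimensions and their values are purely hypothetical and say nothing about how Waymo actually structures its simulations.

```python
from itertools import product

# Hypothetical scenario dimensions; none of these values come from Waymo.
LIGHTING = ["day", "dusk", "night_lit", "night_unlit", "glare"]
WEATHER = ["clear", "rain", "fog", "snow"]
ROAD_USER = ["adult", "child", "cyclist", "person_pushing_bike",
             "wheelchair", "costume"]
ROAD_USER_SPEED_MPS = [0.5, 1.0, 1.5, 2.0, 3.0]
CAR_SPEED_MPH = list(range(20, 70, 5))  # 20, 25, ..., 65


def scenario_variations():
    """Yield one dict per combination of the dimensions above."""
    for light, weather, user, user_speed, car_speed in product(
        LIGHTING, WEATHER, ROAD_USER, ROAD_USER_SPEED_MPS, CAR_SPEED_MPH
    ):
        yield {
            "lighting": light,
            "weather": weather,
            "road_user": user,
            "road_user_speed_mps": user_speed,
            "car_speed_mph": car_speed,
        }


variations = list(scenario_variations())
print(len(variations))  # 5 * 4 * 6 * 5 * 10 = 6000
```

Five small lists are enough to produce 6,000 variations of a single crossing scenario, including a person pushing a bike on an unlit road at night.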

To prevent future accidents like this, better classification, higher-resolution and more light-sensitive cameras, and additional sensors (read: infrared sensors) could help.

Of course this is all speculation and we have to wait for the final accident report.

This article has also been published in German.

Please use “crash” or “collision”, not accident. Police forces around the world are making this change to emphasize that collisions are the result of choices, not chance.

In the case of collisions involving autonomous vehicles it is even more important not to use “accident” since computers don’t have accidents, they behave according to their programming.

Thank you.

So much garbage speculation. We have the human backup driver in the car, specifically there to prevent this kind of accident. She said the pedestrian came out of nowhere. The police chief saw the car video, and she said the pedestrian came out of the shadows and there was no time to prevent the accident. The car itself did not have time to slow down before the accident. All information so far points to the pedestrian causing the accident. All the facts so far are against your preposterous speculation. We wait for the investigation to conclude.

This article is about why the car didn’t react by itself. Who is at fault doesn’t matter for this discussion.

Regarding “She said, the pedestrian came out of nowhere.”: Yes, because she was not looking at the road until the last moment.

Where I see the issue is that you shouldn’t rely on classification. All you need is the basic functionality of not running things over; whether that thing is a bush, a person, or an alien does not matter. On top of that you can still adapt the behavior based on classification, but you should never choose to run something over just because it was classified as a bush.

In this case the 64-beam lidar and the front radar should have seen the pedestrian as an object, and the tracking algorithm should have predicted a collision. It is still possible that the pedestrian started moving just as the car came closer; in that case it is a latency issue, but the car should at least have hit the brakes. To me, all this indicates bad algorithm design on Uber’s side.

In addition, the behavior should be such that the car slows down in the presence of a pedestrian close to the planned path. Nobody drives at 40 miles/h right past a pedestrian on the road (only in China and some other countries do they do that).

To understand why the car did not stop, we need to trace what the program was doing during the 10 seconds before the accident. That information you do not know. The investigation will find out. Your speculation is not helping. The backup driver was looking down and up. Not just down. The backup driver was looking up before the pedestrian was hit. The driver did not see the pedestrian because the pedestrian came out of nowhere, as the external video shows. Your classification theory on how the autonomous car program works is speculation and meaningless. Unless you worked on the Uber autonomous car program. If a person is standing next to the highway and there is no walkway crossing for pedestrians, then cars do not need to slow down. A car needs 2.8 seconds to stop from 38 mph. The car needs some time to compute that it needs to stop. So about 3 seconds are needed for the car to stop. If the car was less than 3 seconds away at the time the pedestrian stepped onto the highway, then the car is not at fault. The investigation will tell us that. People are saying the backup driver was texting. Maybe she was looking at computer screens that monitor the car driving and the highway. Which is part of her job. So far the blame is all on the pedestrian. She was not looking where she was walking. A homeless person daydreaming. A lot on her mind. Or maybe not thinking at all. Walked right onto a highway. Tragic end.
